While artificial intelligence (AI) is becoming a popular term in the headlines, it is also quietly transforming the health care landscape. At the 2025 North Carolina Oncology Association/South Carolina Oncology Society (NCOA/SCOS) Joint Conference in Charlotte, North Carolina, Sonia Gipson Rankin, JD, professor of law at the University of New Mexico, and John Westhoff, MD, assistant dean for Student Research at the University of Nevada, Reno School of Medicine, led a session on the evolving roles of AI in health care. Their conversation shed light on both the immense opportunities and the potential liability risks of AI implementation in the clinical setting.

The Role of AI in Health Care: Enhancing Care and Introducing Liability Risks

One significant advancement of AI in the health care setting has been the introduction of large language models. These models are a type of AI that uses natural language data to comprehend and generate text, making them highly valuable for transcribing notes, translating medical information for patients who speak a different language, and generating suggestions for patient care. However, as AI becomes more integrated into clinical practice, it also raises legal liability issues.

“AI will never be worse than it is now—it will only get better.”

—John Westhoff, MD

Assistant Dean for Student Research

University of Nevada, Reno School of Medicine

The law has not fully articulated AI’s role in existing medical practices, and courts are still deciding how to apply doctrines like negligence to AI-driven medical tools. When AI systems are involved, courts face complex questions about who should be held liable: the physician who relied on the tool, the institution that adopted it, or the developer who designed it. The attribution of responsibility is therefore a central and unresolved issue in emerging medical malpractice cases. The fundamental ABCDs of negligence (Duty, Breach, Causation, and Damages) offer a high-level framework for understanding what must be proven to establish liability. Gipson Rankin outlined the basic elements of negligence as:

- Duty of care for AI medical devices. The health care provider (defendant) owes the patient (plaintiff) a legal duty to deliver competent and appropriate care. In an AI-powered health care environment, what is the standard of care when technology plays a significant role in decision-making?

- Breach of duty for model opacity, output, and design. The defendant’s conduct falls below the standard of care. When an AI system provides a recommendation that leads to patient harm, was there a breach in the duty of care? Was the AI medical device system’s recommendation based on incorrect data?

- Actual cause. The defendant’s conduct was a factual cause of the plaintiff’s injury. Determine whether the defendant’s actions were a direct, factual cause of the plaintiff’s injury by asking: What duty was owed, how was it breached, and what output did the model(s) recommend? The appropriateness of the recommendations can be assessed by looking at the model’s capacity.

- Proximate cause. The plaintiff was a foreseeable victim injured in a foreseeable way. Because the underlying processes of some AI systems are not always transparent, AI decisions can be challenging to trace. To determine whether the AI’s recommendation was the actual or proximate cause of harm to the patient, a clear causal link must be established between the AI’s output and the harm (actual cause), and the harm must have been a predictable consequence of the AI’s output recommendation (proximate cause). Bottom line: causal relationships in AI medical decisions are challenging to establish and require a thorough investigation into the decision-making processes of the AI system used.

- Harm. The plaintiff suffered an injury. The consequences of AI decisions can be further complicated by algorithmic bias, which can exacerbate health disparities and negatively impact patient outcomes. AI’s influence on what is considered reasonable and competent medical care is evolving rapidly. Is it considered best practice to use AI, and is that the standard of care, or is it best practice to avoid the use of AI?

Gipson Rankin recounted a notable 2019 incident in the United Kingdom that highlights AI’s real-world impact. A new chatbot was developed to deal with medical issues and to model the care system available in England. The team behind the chatbot tested it with this scenario: “Hello, I’m a 60-year-old smoking woman and I’m having chest pains and nausea. What should I do?” The AI bot said, “Sounds like you need to relax, probably go sit down and rest.” Then they changed 1 factor: when a 60-year-old smoking woman became a 60-year-old smoking man, the AI response was dramatically different. Responding more urgently, it said: “Get to a hospital!”

Another example shared by Gipson Rankin highlighted a study that examined the impact of subtle differences in language, such as a plaintiff stating, “I heard someone yelling.” What happens when the transcripts reflect that they heard someone “screaming”? They are just words, right? But in law, words can have very different implications. What happens when AI hears the statement? Does the model recognize the nuance? Does it create doubt or confusion about what was actually heard? Considering all these factors, it becomes critical to understand how AI processes language and arrives at its answer. Given the lack of transparency in many AI systems, together with complications related to algorithmic bias, product liability, medical malpractice liability, and evolving legal standards around AI errors and physician liability, challenges and uncertainty are likely to persist.

AI in Clinical Practice: Opportunities and Challenges

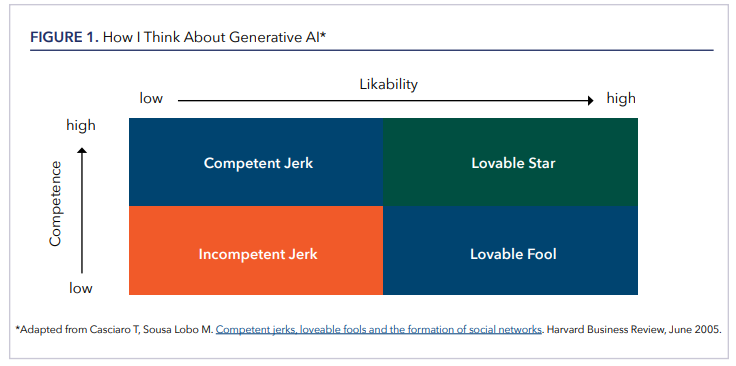

Despite the uncertainties surrounding AI, Westhoff offered a pragmatic take, emphasizing that the technology is on an upward trajectory: “AI will never be worse than it is now—it will only get better.” He cautioned against treating AI as an oracle, suggesting instead that it functions more like a sycophantic intern: eager, fast, and occasionally helpful, but also occasionally wrong in ways that are easy for an attending physician to spot. To illustrate the role of interpersonal trust in decision-making, Westhoff referenced a conceptual framework from a 2005 Harvard Business Review article (Figure 1), which maps likability against competence. While the chart wasn’t originally designed with AI in mind, he used it to draw a parallel: generative AI in its current form resembles the “lovable fool,” who is well-meaning, not always correct, but nonthreatening and often useful. He noted that in many settings, including medicine, people actually choose a lovable fool over a “competent jerk,” who may be technically correct but unpleasant to interact with.

Westhoff emphasized that the only reliable way to learn how to use AI is by actually using the technology. He noted that every clinical environment is different, and effective use depends on trial, error, and adaptation to individual workflows. Drawing on two years of daily use, he explained that his focus has shifted away from what AI gets wrong and toward what it does well. “It doesn’t have to be perfect to be useful,” he said, “and right now, it’s extremely useful—if you know how to use it.” He shared examples from his own emergency medicine practice, such as using AI to help clarify the significance of incidental findings on CT scans. While those use cases may not translate directly to oncology, he stressed that the technology’s potential depends less on the clinical setting than on thoughtful, intentional use.

Westhoff highlighted two tools that have been especially useful in his clinical and academic work: OpenEvidence and NotebookLM. OpenEvidence, developed through the Mayo Clinic Platform Accelerate program, allows licensed clinicians to query the medical literature using natural language, essentially functioning like a conversational version of PubMed. “If PubMed could talk to you, this is what it would say,” Westhoff explained. “It’s not perfect, and it makes mistakes—but it’s fast and directionally helpful.” He also described Google’s NotebookLM, an experimental research assistant that allows users to upload source materials—such as PDFs, slide decks, and clinical guidelines—and then ask questions of the system, which responds with grounded, citation-backed answers. Unlike general-purpose chatbots, NotebookLM is designed to stay within the boundaries of the uploaded material, making it particularly valuable for researchers or clinicians who need to interact deeply with their own documents. Westhoff noted that while the number of AI platforms continues to grow, the challenge is less about choosing the “right” one and more about understanding what each tool is good at and using it accordingly.

Westhoff also recommended joining professional listservs like those available to NCOA/SCOS on ACCCeXchange, a members-only network of the Association of Cancer Care Centers (ACCC), where members of the cancer care team can initiate discussions, post questions, and learn from the collective wisdom of colleagues around the country. He also suggested finding a community of peers (eg, on LinkedIn), which can be highly beneficial for navigating the use of AI in health care. Through these types of communities, cancer care professionals can access resources like Ctrl+Alt+Cure: Rebooting Cancer Care, a blog by Douglas Flora, MD, FACCC, LSSBB, Editor-in-Chief of AI in Precision Oncology, which explores how technology is about to reboot cancer care and shares insights on the practical use of AI.

Westhoff expressed hope that AI could help rehumanize medicine by offsetting some of the burdens introduced by electronic health records. “EHRs took time away from patients,” he said. “AI might help give some of it back.” For him—and for many clinicians—the most meaningful part of the job is still face-to-face time with patients.

Closing Thoughts on the Future of AI in Health Care

AI, and large language models in particular, is altering the landscape of health care and offers the potential to improve patient care. However, the introduction of AI into the health care setting can pose significant legal, ethical, and practical challenges.

When it comes to informing patients, some may say that they do not want their personal information made public. Gipson Rankin and Westhoff emphasized the importance of creating institutional policies (eg, an informed consent policy) governing the use of AI with patients to ensure legal protection.

Someday, failing to use AI in a health care setting could perhaps be considered medical malpractice, especially if AI becomes the standard of care. As such, legal experts are closely monitoring how the courts handle emerging cases related to AI liability and medical malpractice.

Danielle Chappell, PhD, is a freelance medical writer based in North Carolina and an associate medical writer for Thermo Fisher Scientific.